Deploying DeepSeek locally on a Linux server and accessing it remotely from a Mac through a web UI


1. Deploying the DeepSeek model on the Linux server

To install and use the model on Linux through Ollama, follow the steps below.

Step 1: Install Ollama

Install Ollama:
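The install command itself is not reproduced in this text. A minimal sketch, assuming the official install script published at ollama.com and a user with sudo rights (the script asks for elevated privileges when it needs them):

```shell
# Download and run Ollama's official Linux install script.
# Review the script first if you prefer not to pipe remote code into a shell.
curl -fsSL https://ollama.com/install.sh | sh
```

On systemd-based distributions the script also registers an `ollama` service, which the service configuration steps later in this article rely on.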

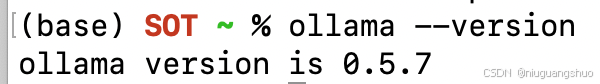

Verify the installation:
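A quick check, assuming the installer placed the `ollama` binary on the PATH:

```shell
# Print the installed Ollama version; an error here means the binary is not on PATH.
ollama --version
```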

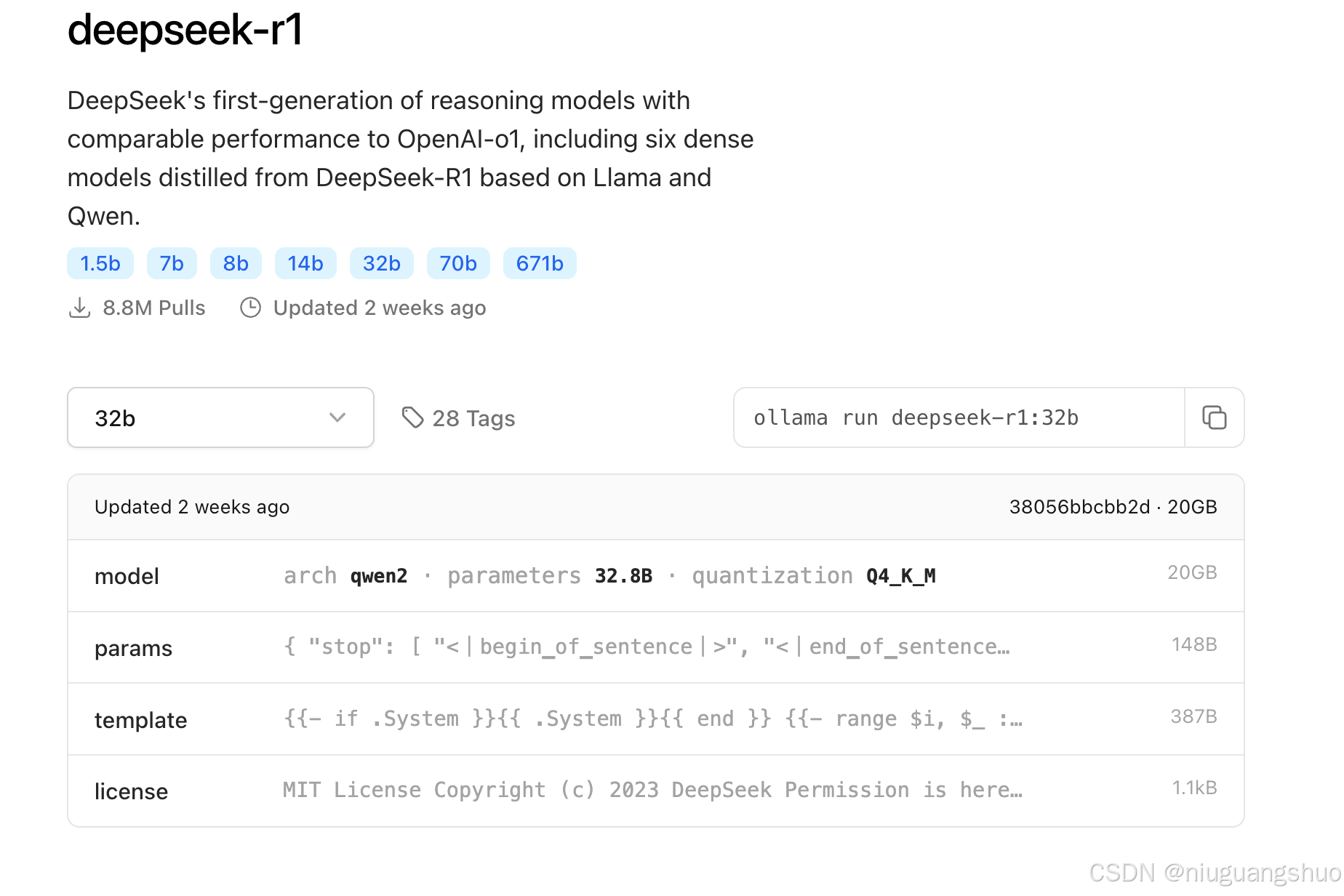

Step 2: Download the model

This step downloads and starts the DeepSeek R1 32B model.
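The command for this step did not survive in this text. A minimal sketch, assuming the `deepseek-r1:32b` tag from the Ollama model library:

```shell
# Pull the model on first use (a multi-gigabyte download), then open an interactive prompt.
ollama run deepseek-r1:32b
```

Typing `/bye` at the prompt ends the session; the downloaded model stays on disk for later runs.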

List of DeepSeek R1 distilled models
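The table itself is not reproduced here. As a rough sizing aid, the sketch below estimates weight memory for the published DeepSeek-R1 distilled sizes (1.5B, 7B, 8B, 14B, 32B, 70B) at 4 bits per weight. The helper name `weight_gb` is hypothetical, and the figure covers weights only: the KV cache and runtime overhead come on top, so real VRAM use is higher.

```shell
# Estimate weight memory in GB: (parameters in billions) * (bits per weight) / 8.
weight_gb() {
  # $1 = parameters in billions, $2 = bits per weight
  awk -v p="$1" -v b="$2" 'BEGIN { printf "%.1f\n", p * b / 8 }'
}

# Print the estimate for each distilled model size at 4-bit quantization.
for size in 1.5 7 8 14 32 70; do
  echo "${size}B at 4-bit: ~$(weight_gb "$size" 4) GB of weights"
done
```

For the 32B model this gives about 16 GB of weights, which leaves some headroom on a 24 GB card, while the 70B model at about 35 GB does not fit.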

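An RTX 4090 has 24 GB of VRAM, and the 32B model needs about 22 GB at 4-bit quantization, so it fits this hardware. At 4 bits per weight, 32 billion parameters take roughly 16 GB for the weights alone; the KV cache and runtime overhead account for much of the rest. On reasoning benchmarks the 32B model performs well, coming close to the 70B model while needing far fewer hardware resources.

Step 3: Run the model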
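A minimal sketch of running the model from the command line, assuming the `deepseek-r1:32b` tag; the prompt text is only an example:

```shell
# Confirm the model is present locally.
ollama list

# Ask a one-shot question; omit the quoted prompt to start an interactive session instead.
ollama run deepseek-r1:32b "Briefly introduce yourself."
```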

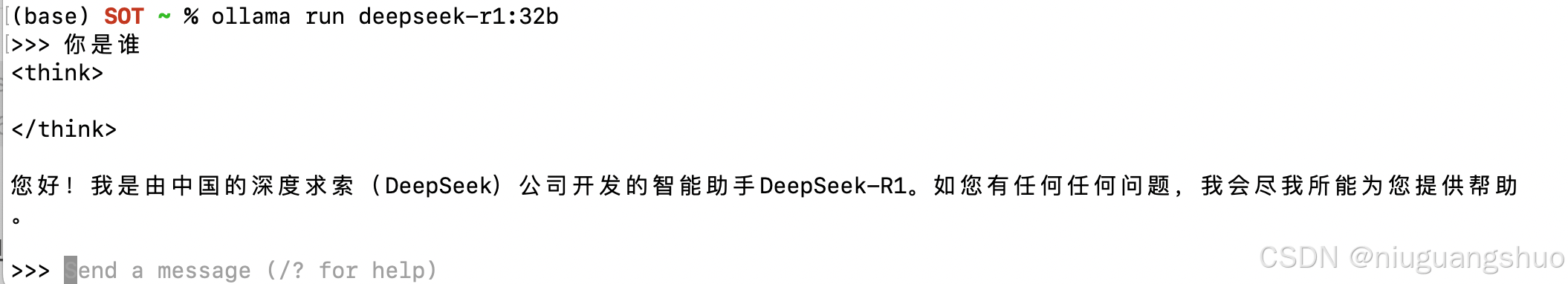

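With the steps above, DeepSeek can already be used directly from the command line on the Linux server. That is not very convenient, though, so the following sections describe a friendlier setup.

2. Configuring the Ollama service on the Linux server

1. Set the Ollama service configuration

Set the environment variable OLLAMA_HOST=0.0.0.0. This makes the Ollama service listen on all network interfaces, which allows remote access.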
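A minimal sketch of this setting, assuming Ollama runs as the systemd service `ollama.service` created by the install script. The drop-in directory and the file name `override.conf` follow the usual systemd convention and are assumptions, not taken from the original article:

```shell
# Create a systemd drop-in that sets OLLAMA_HOST for the Ollama service.
sudo mkdir -p /etc/systemd/system/ollama.service.d
sudo tee /etc/systemd/system/ollama.service.d/override.conf >/dev/null <<'EOF'
[Service]
Environment="OLLAMA_HOST=0.0.0.0"
EOF
```

Running `sudo systemctl edit ollama.service` and entering the same two lines in the editor produces an equivalent drop-in.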

2. Reload and restart the Ollama service
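A minimal sketch, again assuming the service is managed by systemd under the name `ollama`:

```shell
# Re-read unit files so systemd picks up the changed environment, then restart Ollama.
sudo systemctl daemon-reload
sudo systemctl restart ollama
```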

3. Verify that the Ollama service is running

Run the following command to confirm that the Ollama service is listening on all network interfaces:
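The original command is missing from this text. One way to check, assuming the `ss` utility is available and Ollama uses its default port 11434:

```shell
# List listening TCP sockets with their owning process, filtered to Ollama's port.
sudo ss -tlnp | grep 11434
```

Depending on the tool and the system's IPv6 settings, the wildcard address may appear as `0.0.0.0:11434`, `*:11434`, or `:::11434`. A line showing `127.0.0.1:11434` means the service is still bound to localhost only.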

The output should show that the Ollama service is listening on all network interfaces (0.0.0.0).

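4. Configure the firewall to allow remote access

So that the Linux server accepts outside connections to the Ollama service, configure the firewall to allow traffic on port 11434.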
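The article does not say which firewall the server runs. A minimal sketch, assuming `ufw` (common on Ubuntu and Debian), with the `firewalld` equivalent left as comments:

```shell
# ufw: allow inbound TCP traffic on Ollama's port.
sudo ufw allow 11434/tcp

# firewalld (RHEL/CentOS/Fedora) equivalent, if that is what the server runs:
# sudo firewall-cmd --permanent --add-port=11434/tcp
# sudo firewall-cmd --reload
```

On a shared network it may be preferable to allow only the Mac's address, for example `sudo ufw allow from 192.0.2.10 to any port 11434 proto tcp`, where 192.0.2.10 is a placeholder.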

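5. Verify the firewall rules

Make sure the rule was added correctly and port 11434 is open. The firewall status can be checked with the following command: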
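A minimal sketch under the same assumption that the firewall is `ufw`:

```shell
# Show the active rules; port 11434/tcp should be listed as allowed.
sudo ufw status verbose

# firewalld equivalent:
# sudo firewall-cmd --list-ports
```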

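6. Test remote access

With the configuration above in place, test access to the Ollama service from a remote device such as the Mac: first check that the service responds, then send a test question to the model. The response to the test question looks like this: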

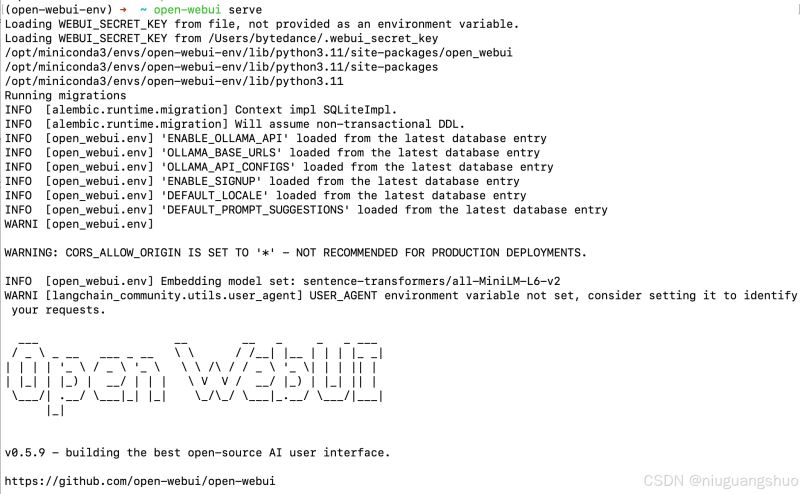
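The remote test can be sketched with `curl` against Ollama's HTTP API, run from the Mac. This assumes the server address 10.37.96.186 that the article uses for its own server, the default port 11434, and the `deepseek-r1:32b` tag; the prompt text is only an example:

```shell
# Address of the Linux server running Ollama (replace with your own).
OLLAMA_URL="http://10.37.96.186:11434"

# 1) Connectivity check: list the models the server has available.
curl "$OLLAMA_URL/api/tags"

# 2) Test question: request a single, non-streamed completion.
curl "$OLLAMA_URL/api/generate" -d '{
  "model": "deepseek-r1:32b",
  "prompt": "Why is the sky blue?",
  "stream": false
}'
```

If the first request times out, the usual suspects are the firewall rule or a service still bound to 127.0.0.1.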

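With the steps above, the Ollama service is configured on the Linux server and the DeepSeek model has been reached remotely from the Mac. The next section covers installing a web UI on the Mac for more convenient interaction with the model.

3. Installing the web UI on the Mac

To interact more conveniently with the DeepSeek model on the remote Linux server, install a web UI tool on the Mac. Open WebUI is recommended here: it is a browser-based interface that supports several AI model backends, including Ollama.

1. Install open-webui with conda

Open a terminal and run the following commands to create a new conda environment with Python 3.11: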
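A minimal sketch, assuming conda is already installed on the Mac. The environment name `open-webui` is an assumption, and the `pip install` step covers the installation named in the heading:

```shell
# Create and activate an isolated environment pinned to Python 3.11.
conda create -n open-webui python=3.11 -y
conda activate open-webui

# Install Open WebUI from PyPI into that environment.
pip install open-webui
```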

2. Start open-webui
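A minimal sketch, assuming the package was installed into a conda environment named `open-webui` (the name is an assumption):

```shell
# Activate the environment and start the Open WebUI server (default port 8080).
conda activate open-webui
open-webui serve
```

The first start can take a while because the server downloads its supporting assets before it begins listening.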

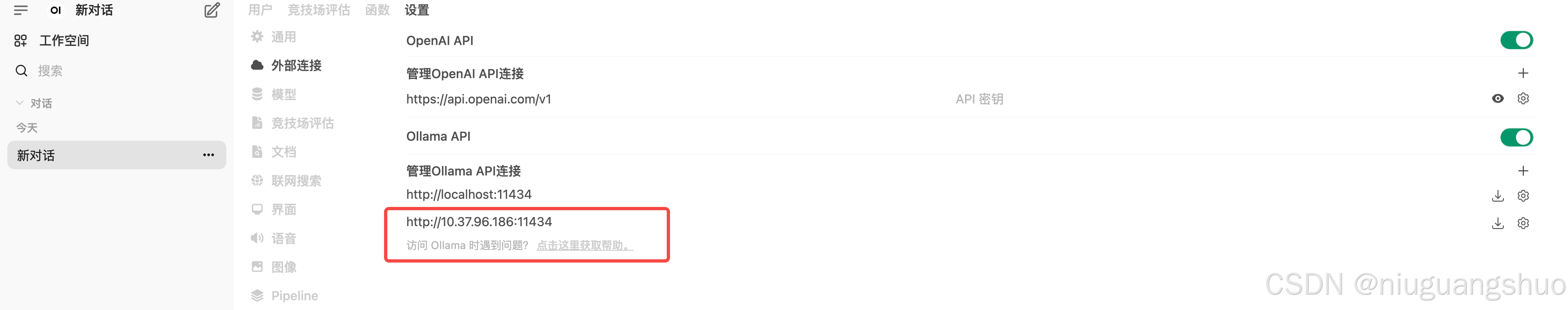

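3. Open http://localhost:8080/ in a browser

- Log in as the administrator (the first registered user).
- In the Open WebUI interface, click "expand sidebar" (the three bars at the top left) -> the avatar (bottom left) -> Admin Panel -> Settings (top) -> External Connections.
- In the Ollama API row under External Connections, turn the switch on and enter http://10.37.96.186:11434 (this is my server address; use your own).
- Click the "Save" button at the bottom right.
- Click "New Chat" (top left) and check that the model list loads correctly. If it does, the setup is complete.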

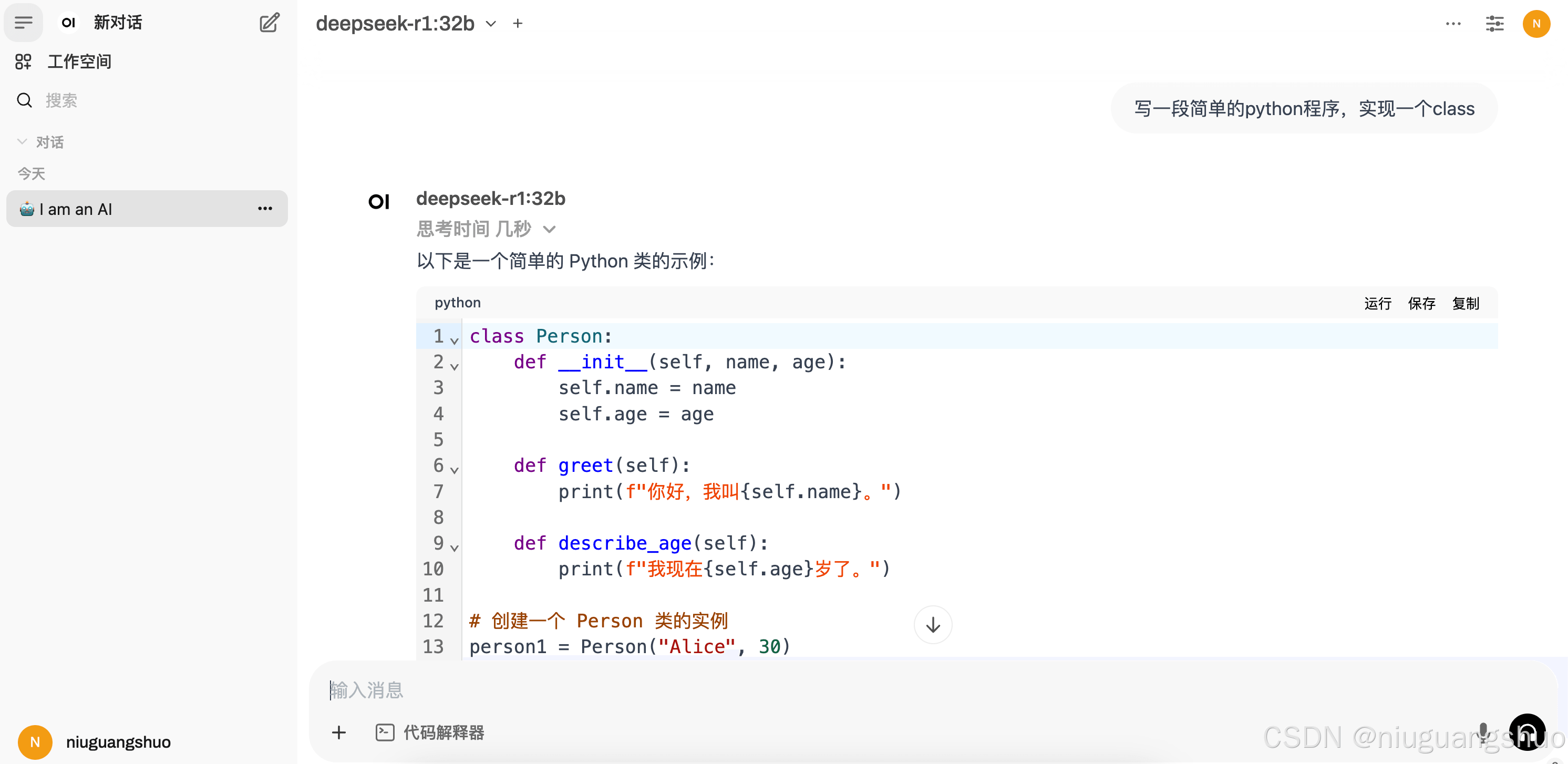

4. Enjoy using the local DeepSeek model
